Testing business model innovation

Article two in a two-article series about design thinking and business model innovation with new technology.

In our work, we’ve often seen clients and partners treat the result of modelling the Value Proposition Canvas and the Business Model Canvas as a depiction of “reality” or, better but still not quite right, as a description of the “plan” behind a product or service.

It’s important to realize, though, that what you have is a model. It is a somewhat tangible depiction of a concept or a set of business ideas. How well that model maps to “what could be” is quite possibly very uncertain.

Read article one

Prototyping and business models

So, how do you take the next step? How do you find the best way forward? Many of us are unconsciously biased towards what we think is either easiest or most interesting. We’ve seen that many entrepreneurs with an engineering background focus intently on the technical aspects and technical feasibility of a product or service. Another approach, and maybe a slightly more successful one, is what we have seen from purely business-oriented teams, where financial viability and the market are among the first aspects to be explored.

Lastly, the design-oriented teams will work a lot with the value proposition, testing and re-testing the usability and applicability of a solution to real and meaningful problems. While you can still get lucky and find all the right solutions, the risk of missing important aspects at the earliest and best possible time is big. One approach to overcome this, offered and explored in Strategyzer's new “Testing Business Ideas”, matched our own experience. It is now integrated in our own “From Idea to Business” methodology and is described here, in this second part of the small series on “Design Thinking and Business Model Innovation”.

Identifying your assumptions reveals your blind spots

What most of us bring with us, also when designing new products or services, are assumptions about how the “real world” functions. We might have great and well-founded theories – for example about physics, materials, prices, logistics and supply chains. But innovation is not about doing something that has already been done and proven. There will be assumptions and hypotheses supporting your idea. Designers know this, and (as described in part one of this article series) it forms the basis of design thinking: being able to identify what needs to be tested, figuring out how to test it – and then approaching the results of the tests critically and with a learning mindset.

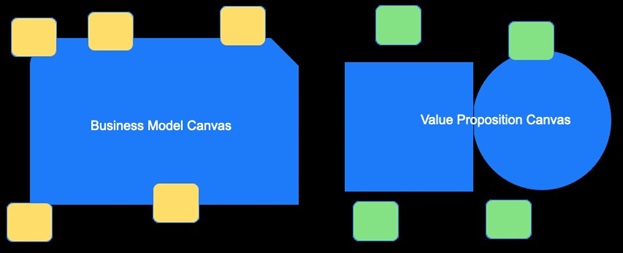

This approach has not been widely adopted in other fields, though, and especially not as a formalized and acknowledged way of modelling and testing business models. We’ve seen small steps in this direction. For instance, the “Lean Startup” method (Eric Ries) works in a very similar way with its “build, measure, learn” credo. What we gain with the approach described here is a way to connect our models of the value proposition and the business model to a prioritized list of important hypotheses. These hypotheses must then in turn be tested and validated. With a business concept split into 9 parts for the business model and 6 parts for the value proposition model, you have a great way to look critically at your thinking and assumptions in all parts – also the ones outside your comfort zone.

Looking at your models, now is the time to pose the question to yourself and your team: what needs to be true for this to work? Not only at a macro level, but for each part of the value proposition and the business model. It’s difficult work – but important, as it gives your innovation team the best possible chance of identifying potential problems at the earliest possible time. Figure 6 below illustrates how you can traverse your business model and value proposition canvas and identify assumptions.

In our experience, some of the basic assumptions that influence technology-driven innovation are assumptions like:

Viability

This can make us money – i.e. we have sufficient insights into the total cost of our operations and supply chain with this new technology.

Feasibility

We can build this – at scale with sufficient quality.

Desirability

The added cost or complexity involved still offers a value to our users and customers.

Mind you, the above assumptions are meta-level. What the assumption-exploration process allows you to do is dive deeper and detail them with respect to your concrete concept and model. We’ll dive a bit deeper into these in the following paragraphs – and end with a couple of examples from the real world.

Find a path for validation through testable hypotheses

We all tend to be biased by our existing world view. The assumptions with which we function and navigate our everyday lives pervade everything we do – both as individuals and in the company culture that we are a part of. Of course, the example above, with the engineering team focusing on specs, the business team on the market and the design team on the users, is an over-simplification – but not too far from the everyday truth of most companies. Therefore, this step – finding your assumptions and formulating hypotheses around them – is both very difficult and very important. Hidden in your assumptions you might find both the success and the spectacular failure of your new product or service.

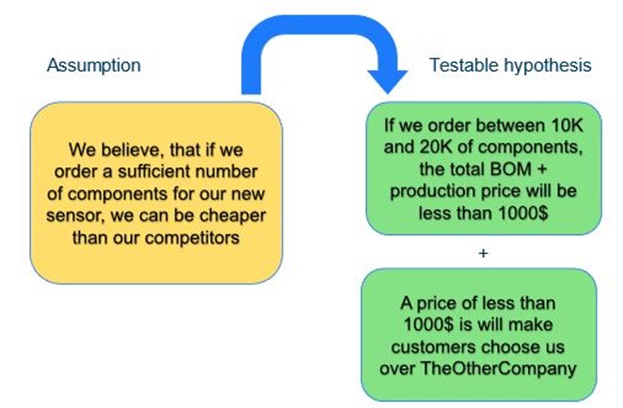

The first thing to do, then, after your assumption mapping, is to look through each assumption and formulate it as a testable, precise and discrete hypothesis.

- A testable hypothesis can be either supported (validated) or refuted (invalidated) by some evidence. Do not mistake “supported” for “proven” in any scientific sense, by the way. For most, that is basically unobtainable. But you can have your assumptions either supported or refuted with varying degrees of certainty – and knowing the strength of your evidence, in either direction, is an important part of moving forward towards implementing (or dropping) your concept.

- A precise hypothesis specifies, in sufficient detail to be valuable, the subject, domain, amount and/or timing of the hypothesis. If the details of the hypothesis are too broad, you will not get really useful or actionable data out of it.

- A discrete hypothesis doesn’t try to validate more than one thing at a time. It is concrete and directed towards a meaningful, distinct aspect of your assumption. In this way, the results are less prone to discussion and interpretation. Sometimes, this means that you need to split up an assumption into several supporting hypotheses.

From our cases, here is an example:

Hopefully, you can see the difference here – and the value. First finding your assumptions and then formulating hypotheses around them gives you a unique chance to speed up some of the learnings you might otherwise only find after you’ve introduced your product or service into the market. We all know by now that “pivoting” is almost expected by investors and startups as something that will happen in every startup’s early life. Pivoting happens when your assumptions about the market, your product or your users turn out to be wrong. This method gives you a chance to “pivot” cheaper, earlier, and faster.

Mapping and prioritising what is important and unknown

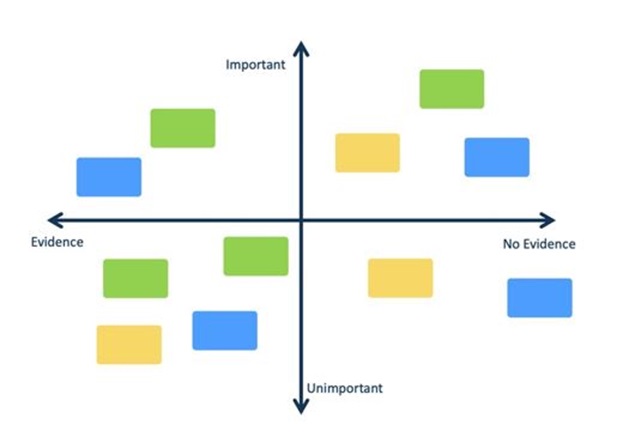
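
One way to make the prioritisation in this section concrete is a small script that places each hypothesis in a two-by-two grid of importance against evidence strength. What follows is a minimal Python sketch, not part of the original methodology: the 0-to-1 scores, the 0.5 threshold, the quadrant labels, the ordering rule and the example hypotheses are all illustrative assumptions.

```python
from dataclasses import dataclass

# Assumed quadrant labels, listed in the order we would look at them.
QUADRANT_ORDER = ["test first", "keep monitoring", "test later", "park"]


@dataclass
class Hypothesis:
    statement: str
    importance: float  # assumed scale: 0.0 (minor) to 1.0 (concept fails if wrong)
    evidence: float    # assumed scale: 0.0 (no evidence) to 1.0 (strong evidence)


def quadrant(h: Hypothesis, threshold: float = 0.5) -> str:
    """Place a hypothesis in one of the four cells of the grid."""
    important = h.importance >= threshold
    supported = h.evidence >= threshold
    if important and not supported:
        return "test first"       # important and unknown
    if important and supported:
        return "keep monitoring"  # important, already has evidence
    if not supported:
        return "test later"       # less important and unknown
    return "park"                 # less important, already has evidence


def prioritise(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Order by quadrant, then by importance (high first), then by evidence (weak first)."""
    return sorted(
        hypotheses,
        key=lambda h: (QUADRANT_ORDER.index(quadrant(h)), -h.importance, h.evidence),
    )


if __name__ == "__main__":
    # Hypothetical example hypotheses, invented for illustration only.
    backlog = [
        Hypothesis("We can build 1,000 units per month at target quality", 0.8, 0.7),
        Hypothesis("Customers will pay a 10% premium for the new feature", 0.9, 0.2),
        Hypothesis("Users prefer a mobile app over a web dashboard", 0.3, 0.1),
    ]
    for h in prioritise(backlog):
        print(f"{quadrant(h):<16} {h.statement}")
```

Scoring on a continuous scale rather than sorting sticky notes by hand is only a convenience. The point of the exercise stays the same: hypotheses that are important and poorly supported come out on top.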

After the hypothesis formulation process, the next phase of your innovation journey needs careful planning: now you need to prioritise your assumptions. What’s important, and what is less important? What do you already have evidence supporting? What is completely unknown? You can map this out by placing your assumptions in a two-by-two grid, with importance on one axis and evidence strength on the other. This exercise will leave you with a pretty clear idea of what needs to be investigated – and what is less important.

Continuous learning will make you better at business innovation

In this micro-series of two articles on design thinking and business modelling, we’ve discussed the initial modelling part and now the testing part. After these two phases, an important learning part needs to follow. Often, when testing and validating your model, you’ll get unexpected evidence that you need to consider – and you might have to go back to modelling. In these situations, take care that what you bring back are real insights, and let them inform changes and “pivots” the right way. At FORCE Technology, we’ve used concept maps, test-result cards etc. as different ways to collect the learnings and help us act on them. If you adhere to agile methodologies, you might know of “retros” or “closing the loop” sessions, which are very similar in nature.

Ever since we started working with “electronic sketching” in the early 2000s, inspired by Bill Buxton and his work at Microsoft, we’ve known that experimentation, tinkering and prototyping combined with real-life testing and validation are the best way to work with ideation and the early phases of product development. We started working more with business modelling as we saw how many otherwise great products and services failed in the market. In our own research, we saw a failure rate of almost 80% across product introductions with new technology – where 40% failed on feasibility, and the rest on viability and desirability!

Diving deeper into the rabbit hole of business theories, Osterwalder et al.’s early work with the business model canvas opened our eyes to a way of extending isolated product design with a model of the entire business surrounding the product. With assumptions and test plans for business ideas, we have reached a holistic methodology where we can cover more than “just” the desirability or feasibility of a product with new technology, and give new products and services the best possible chance to succeed in our competitive and rapidly evolving market.
